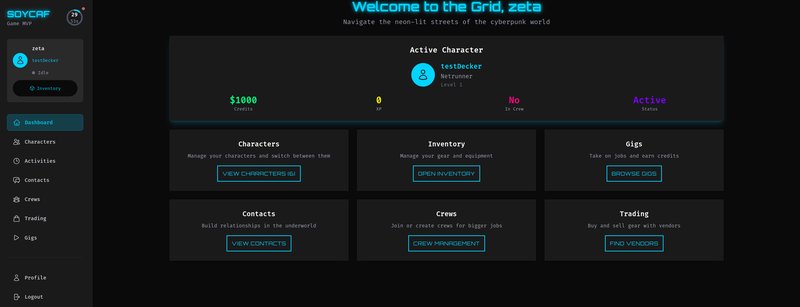

Overview

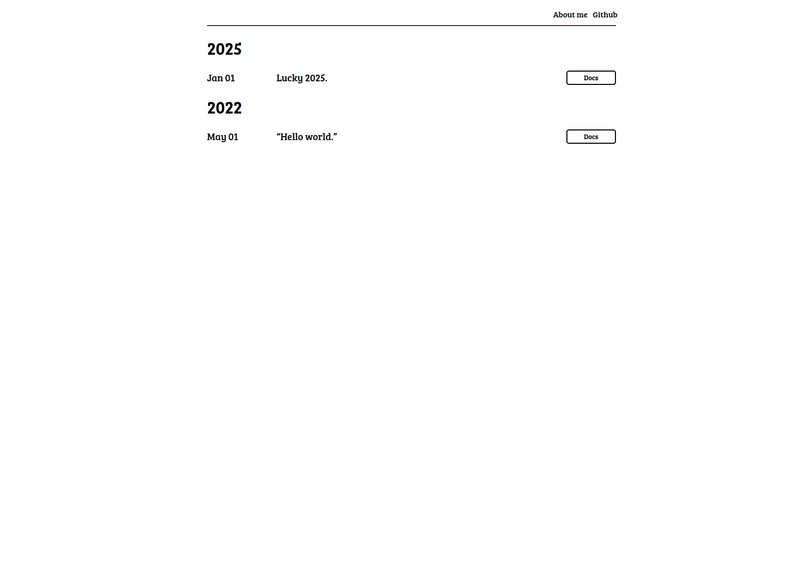

Ziegel.me is a creative, experimental personal website that reimagines the portfolio concept through a retro 2000s desktop interface. Built with Vue.js, the site functions as both a showcase of system projects and a playful exploration of nostalgic web design.

The website is currently nearing v0.2.0, with ongoing development toward a public release at v0.3.0.

Creative Concept

Design Philosophy

Rather than a traditional static portfolio, Ziegel.me presents a working desktop environment where visitors explore projects, services, and information through interactive desktop elements.

Inspiration

- Windows 95/98/XP aesthetic

- Early 2000s web design nostalgia

- Interactive desktop metaphor

- Playful user experience

Features

Desktop Interface Elements

- Taskbar: Navigation and status information

- Desktop Icons: Clickable shortcuts to sections

- Windows: Draggable content windows

- System Tray: Status widgets and notifications

- Start Menu: Application launcher (planned)

- Wallpapers: Seasonal and themed backgrounds

Core Sections

- Projects Showcase: Displays system projects and accomplishments

- Services Directory: Links to hosted services (media, org, dev stacks)

- System Status: Real-time monitoring widgets

- Config Panel: Personalization settings (theme, appearance)

- Pseudo Login: Fictional user authentication (for atmosphere)

- About: Information about the creator and philosophy

Interactive Features

- Draggable Windows: Move content windows around desktop

- Resizable Panels: Adjust window sizes

- Theme Customization: Color schemes and appearance options

- Status Widgets: Live information displays

- Seasonal Effects: Special visuals for holidays/seasons

- Sound Effects: (Optional) Nostalgic UI sounds
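Dragging, focusing and stacking windows comes down to a small amount of state. The sketch below models that state in Python for brevity, although the site itself is written in Vue.js. The `Desktop`/`Window` names, the clamp-to-desktop rule and the z-index counter are assumptions, not the site's actual components.

```python
from dataclasses import dataclass, field


@dataclass
class Window:
    """Position, size and stacking order of one desktop window (hypothetical model)."""
    id: str
    x: int
    y: int
    width: int
    height: int
    z: int = 0


@dataclass
class Desktop:
    width: int
    height: int
    windows: dict[str, Window] = field(default_factory=dict)
    _next_z: int = 1

    def open(self, win: Window) -> None:
        self.windows[win.id] = win
        self.focus(win.id)

    def focus(self, win_id: str) -> None:
        # Raise the window above all others by giving it the next z-index.
        self.windows[win_id].z = self._next_z
        self._next_z += 1

    def drag(self, win_id: str, dx: int, dy: int) -> None:
        # Move the window and clamp it so it never leaves the visible desktop.
        win = self.windows[win_id]
        win.x = max(0, min(win.x + dx, self.width - win.width))
        win.y = max(0, min(win.y + dy, self.height - win.height))
        self.focus(win_id)  # dragging a window also brings it to the front

    def stacking_order(self) -> list[str]:
        # Bottom-most first, top-most last: the order a renderer would paint them.
        return [w.id for w in sorted(self.windows.values(), key=lambda w: w.z)]


desk = Desktop(width=1024, height=768)
desk.open(Window("projects", 10, 10, 400, 300))
desk.open(Window("about", 50, 50, 300, 200))
desk.drag("projects", 2000, -100)  # clamped to x=624, y=0 and raised
print(desk.stacking_order())  # ['about', 'projects']
```

In a Vue component, the same `z` and position values would be bound to each window's inline style.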

Services Integration

Links to hosted services:

- Media Stack: Jellyfin, Calibre, music

- Org Stack: WikiJS, Bitwarden, RSS

- Dev Stack: Gitea, Jenkins, n8n

- Blog: Klinkerlitzchen blog

Technical Architecture

Frontend Stack

Vue.js Application

├── Component Library

│ ├── DesktopWindow

│ ├── Taskbar

│ ├── StartMenu

│ ├── StatusWidget

│ └── Icon

├── State Management

│ ├── Vue Composition API (reactive state, provide/inject)

│ └── Project/Service Data

├── Styling

│ ├── CSS Modules

│ ├── CSS Grid for desktop layout

│ └── Responsive breakpoints

└── Routing

├── Project Views

├── Service Views

└── Settings/Config

Build & Deployment

- Build Tool: Webpack/Vite for bundling

- Development: Hot module replacement for fast development

- Production Build: Optimized bundle with minification

- Deployment: Docker container on VPS

- Reverse Proxy: Traefik for HTTPS

- Blog Integration: Hugo static blog linked/embedded

Performance Optimization

- Code Splitting: Lazy load project and service pages

- Image Optimization: Compress and optimize all assets

- Bundle Analysis: Monitor bundle size

- Caching: Static asset caching strategies

- CDN: Optional CDN for global distribution
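Code splitting and static asset caching are often combined by caching content-hashed bundles for a long time while always revalidating the HTML entry point. The sketch below assumes Vite's default build layout, where hashed bundles land under `/assets/` and files from `public/` are copied unhashed. The max-age values are illustrative choices.

```python
ONE_YEAR = 60 * 60 * 24 * 365  # seconds


def cache_control_for(path: str) -> str:
    """Return a Cache-Control header value for a request path on the static site."""
    last_segment = path.rsplit("/", 1)[-1]
    if path.endswith(".html") or "." not in last_segment:
        # Entry point and client-side routes (answered with index.html) must be
        # revalidated so visitors pick up the new bundle names after a deploy.
        return "no-cache"
    if path.startswith("/assets/"):
        # Hashed file names change whenever the content changes, so they never go stale.
        return f"public, max-age={ONE_YEAR}, immutable"
    # Unhashed files (favicon, wallpapers copied from public/): cache briefly.
    return "public, max-age=3600"


for p in ("/", "/projects/duck-api", "/assets/index-4f3a9c1b.js", "/wallpapers/winter.jpg"):
    print(p, "->", cache_control_for(p))
```

The reverse proxy or web server in front of the container would attach these headers.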

Design Challenges & Solutions

Solution: Classic visual style with modern web technologies underneath

Solution: Intuitive navigation combined with desktop familiarity

Challenge: Mobile Responsiveness

Solution: Graceful degradation for small screens, grid-based responsive layout

Challenge: Accessibility in Creative Design

Solution: Semantic HTML, ARIA labels, keyboard navigation support

Current Development Status

🔄 In Progress - Version 0.2.0 approaching completion

Completed (v0.2.0)

- Core Vue.js architecture

- Desktop interface components

- Project showcase functionality

- Service directory

- Basic styling and theming

- Responsive layout

In Development (v0.2.0 → v0.3.0)

- Top menu/navigation refinement

- Status widget implementations

- Seasonal effects

- Performance optimization

- Accessibility improvements

- Blog integration

Planned (v0.3.0+)

- Pseudo-login system

- Advanced config panel

- Real-time service status

- Community features

- Advanced seasonal effects

- SEO optimization

- Analytics integration

Milestones

Skills Demonstrated

Frontend Development

- Vue.js component architecture

- Single-page application design

- State management via the Composition API rather than a dedicated store library

- CSS Grid and layout techniques

- Responsive design

- Component composition

Creative Web Design

- Nostalgia-driven design

- User experience through metaphor

- Visual hierarchy and aesthetics

- Color theory and theming

- Interactive design patterns

Performance Optimization

- Bundle size optimization

- Code splitting

- Asset optimization

- Caching strategies

- Performance monitoring

Accessibility

- WCAG compliance

- Semantic HTML

- Keyboard navigation

- Screen reader support

- Color contrast
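Colour contrast is one of the few accessibility criteria that can be checked mechanically, which matters with a retro palette of greys and navy. The sketch below implements the WCAG 2.1 relative-luminance and contrast-ratio formulas in Python. The sample colours are classic Windows-style values, not necessarily the site's actual theme.

```python
def _linear(channel: int) -> float:
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.1 definition)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linear(r) + 0.7152 * _linear(g) + 0.0722 * _linear(b)


def contrast_ratio(fg: str, bg: str) -> float:
    lighter, darker = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(fg: str, bg: str, large_text: bool = False) -> bool:
    # WCAG 2.1 Level AA: at least 4.5:1 for normal text, 3:1 for large text.
    return contrast_ratio(fg, bg) >= (3.0 if large_text else 4.5)
```

For example, white title text on a navy (#000080) title bar passes easily. Grey "disabled" text (#808080) on the classic #C0C0C0 panel does not, which is worth knowing before reusing it for real labels.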

Timeline & Effort

- v0.2.0: 10-15 hours remaining

- v0.3.0 (Public): 10-15 hours

- Total estimate: 20-30 hours to full release

Success Criteria

- ✅ Functional, usable desktop interface

- ✅ All projects properly showcased

- ✅ Mobile-responsive experience

- ✅ Fast load times and good performance

- ✅ Accessibility standards met

- ✅ Positive user feedback

Unique Value

Ziegel.me demonstrates:

- Creative thinking in portfolio design

- Modern technical skills (Vue.js)

- Understanding of user experience

- Attention to detail and polish

- Ability to execute ambitious designs

- Balance between aesthetics and functionality

This project stands out in a portfolio by showing personality and creativity beyond straightforward technical projects.

Team

Primary Owner: Ziegel (all collaborators)

This is a team-wide project representing the creative vision of the collective.

Future Vision

Ziegel.me could evolve into:

- A template or theme for others

- A design inspiration for retro web projects

- A community platform using the desktop metaphor

- A game-like experience for system exploration

- A reference for creative portfolio design

Overview

Fenja-Frings.de is a modern personal portfolio website serving as the professional home page for Jemar/Fenja Frings. It showcases projects, skills, experience, and serves as a central hub for professional identity online.

Built with Hugo and JAMstack principles, the site is fast, secure, scalable, and optimized for search engines, demonstrating modern web development best practices.

Purpose & Goals

Primary Goals

- Showcase Professional Work: Display completed projects and accomplishments

- Demonstrate Technical Skills: Highlight technical expertise and knowledge

- Professional Presence: Establish credibility and professional identity

- Attract Opportunities: Generate interest from potential clients or employers

- Share Knowledge: Blog integration for thought leadership and content sharing

Design Philosophy

- Performance First: Fast-loading static site

- Accessibility: WCAG-compliant design

- Mobile-First: Responsive across all devices

- SEO Optimized: Proper metadata and structured data

- Easy to Update: Content-driven with Hugo front matter

Features

Portfolio Section

- Project showcase with detailed descriptions

- Technology stack highlighting

- Links to live demos and source code

- Project metrics and impact information

- Filtering and categorization

Professional Profile

- CV / Resume: Downloadable professional CV

- Skills Matrix: Organized display of technical and soft skills

- Experience Timeline: Career history and roles

- Certifications: Professional certifications and achievements

Blog Integration

- Thought leadership articles

- Technical how-to posts

- Project deep-dives

- Community engagement

About Section

- Professional biography

- Personal interests and hobbies

- Team/collaborators information

- Community involvement

Contact & Social

- Contact form

- Social media links

- Email subscription

- Newsletter signup

Technical Stack

Static Site Generation

- Framework: Hugo (Go-based static site generator)

- Build: Fast incremental builds

- Templating: Hugo templates using Go template (html/template) syntax

- Content: Markdown with YAML front matter

Frontend

- HTML/CSS: Custom semantic markup

- Responsive Design: Mobile-first CSS approach

- Accessibility: WCAG 2.1 Level AA compliance

- Performance: Optimized images, lazy loading

- Fonts: System fonts or selective web fonts

Build & Deployment

- Containerization: Docker for consistent builds

- Build Pipeline: GitHub Actions or similar CI/CD

- Deployment: VPS deployment with static file serving

- Hosting: Personal infrastructure or managed VPS

- SSL/TLS: HTTPS with automatic certificate management

- DNS: Custom domain management (fenja-frings.de)

Performance Optimization

- Static file serving (no server-side processing)

- Content compression (gzip/brotli)

- Image optimization

- Caching strategies

- CDN integration (optional)
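Content compression can be done once at build time instead of on every request. Nginx, for example, can serve a pre-built `.gz` file sitting next to the original via its `gzip_static` directive. The following is a stdlib-only sketch of that build step. Brotli is left out because it needs a third-party package, and the extension list and size cut-off are assumptions.

```python
import gzip
from pathlib import Path

# Text formats compress well; images and fonts are usually already compressed.
COMPRESSIBLE = {".html", ".css", ".js", ".xml", ".json", ".svg", ".txt"}


def precompress(site_dir: Path, min_size: int = 1024) -> list[Path]:
    """Write a .gz sibling for every compressible file of at least min_size bytes."""
    written = []
    for path in sorted(site_dir.rglob("*")):
        if not path.is_file() or path.suffix not in COMPRESSIBLE:
            continue  # also skips .gz files left over from a previous run
        data = path.read_bytes()
        if len(data) < min_size:
            continue  # gains are negligible for tiny files
        out = path.with_name(path.name + ".gz")
        out.write_bytes(gzip.compress(data, compresslevel=9))
        written.append(out)
    return written
```

In the pipeline, this step would run after `hugo --minify` against Hugo's default `public/` output directory, and before the Docker image is built.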

Architecture

Directory Structure

fenja-frings-website/

├── content/

│ ├── portfolio/ # Portfolio projects

│ ├── about/ # About page

│ ├── blog/ # Blog posts

│ └── _index.md # Home page

├── static/

│ ├── images/ # Images and media

│ ├── css/ # Custom stylesheets

│ └── js/ # Client-side JavaScript

├── layouts/

│ ├── _default/ # Default layouts

│ ├── portfolio/ # Portfolio-specific layouts

│ └── partials/ # Reusable components

├── config.toml # Site configuration

└── themes/ # Hugo theme (custom or third-party)

Build Process

Source Files (Markdown, YAML)

↓

Hugo Build

↓

Static HTML/CSS/JS

↓

Docker Image

↓

VPS Deployment

↓

Reverse Proxy (Traefik)

↓

HTTPS + CDN (optional)

↓

Public Website

Content Structure

Portfolio Projects

Each project includes:

- Title and summary

- Detailed description

- Technology stack

- Links (GitHub, demo, documentation)

- Project status

- Effort and timeline

- Key learnings
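Every portfolio entry is a Markdown file with front matter, so the field list above can be enforced by a small CI check before `hugo` runs. The sketch below is illustrative only:

- The key names (`title`, `summary`, `stack`, `links`, `status`, `effort`, `learnings`) are hypothetical.
- The parser understands only flat `key: value` lines. Real front matter should be read with a proper YAML library.

```python
REQUIRED_KEYS = ("title", "summary", "stack", "links", "status", "effort", "learnings")


def split_front_matter(text: str) -> tuple[dict[str, str], str]:
    """Split '---'-delimited front matter from the Markdown body.

    Only top-level 'key: value' lines are read (a deliberately tiny subset
    of YAML, enough for a presence check); nested and list lines are ignored.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}, text
    end = next((i for i in range(1, len(lines)) if lines[i].strip() == "---"), None)
    if end is None:
        raise ValueError("front matter is not closed with '---'")
    meta = {}
    for line in lines[1:end]:
        if ":" in line and not line.startswith((" ", "-")):
            key, _, value = line.partition(":")
            meta[key.strip()] = value.strip()
    return meta, "\n".join(lines[end + 1:])


def missing_keys(text: str) -> list[str]:
    meta, _ = split_front_matter(text)
    return [k for k in REQUIRED_KEYS if k not in meta]
```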

Blog Posts

- Technical articles

- Project retrospectives

- Learning documentation

- Thought leadership

- Community contributions

Skills & Experience

- Technical skills categorized by domain

- Proficiency levels

- Years of experience

- Certifications

- Tools and technologies

SEO & Discoverability

On-Page SEO

- Semantic HTML structure

- Proper heading hierarchy

- Meta descriptions

- Open Graph tags

- Twitter Card tags

- JSON-LD structured data
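JSON-LD structured data is a `<script type="application/ld+json">` block in the page head. Below is a minimal sketch that builds a schema.org `Person` for the home page. The profile link is a placeholder, and in a Hugo site this would normally be a partial template fed from site parameters rather than Python.

```python
import json


def person_jsonld(name: str, url: str, same_as: list[str]) -> str:
    """Return a <script> tag with schema.org Person data for the page <head>."""
    data = {
        "@context": "https://schema.org",
        "@type": "Person",
        "name": name,
        "url": url,
        "sameAs": same_as,  # other profiles (code hosting, social media)
    }
    payload = json.dumps(data, ensure_ascii=False, indent=2)
    # Escape "</" so the JSON can never close the surrounding <script> element early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'
```

Calling `person_jsonld("Fenja Frings", "https://fenja-frings.de", [...])` produces a block that validators such as Google's Rich Results Test can check.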

Technical SEO

- XML sitemaps

- Robots.txt

- Fast page load times

- Mobile responsiveness

- Clean URL structure

- Internal linking strategy

Content SEO

- Keyword optimization

- Quality content

- Regular updates

- Comprehensive coverage

- Unique perspectives

Deployment

Infrastructure

- VPS: Rented or personal server

- Web Server: Nginx or similar for static files

- Reverse Proxy: Traefik for HTTPS/routing

- Domain: fenja-frings.de with proper DNS configuration

- Monitoring: Integrated with homelab monitoring

Deployment Pipeline

Git Commit

↓

GitHub Actions Trigger

↓

Hugo Build

↓

Image Optimization

↓

Docker Build

↓

Push to Registry

↓

VPS Deployment

↓

Verify Health Check

↓

Announce Update
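The "Verify Health Check" step usually means polling the freshly deployed container until it answers. If it never does, the job fails and nothing is announced. Here is a sketch with exponential backoff. The probe is injectable so the retry logic can be tested without a network, and the attempt count and delays are assumptions.

```python
import time
import urllib.request
from typing import Callable


def http_probe(url: str, timeout: float = 5.0) -> bool:
    """Return True if the URL answers with an HTTP 2xx status."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return 200 <= resp.status < 300
    except OSError:  # covers connection errors, timeouts and HTTPError
        return False


def wait_until_healthy(
    probe: Callable[[], bool],
    attempts: int = 6,
    initial_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Call probe until it succeeds, doubling the wait between attempts."""
    delay = initial_delay
    for attempt in range(1, attempts + 1):
        if probe():
            return True
        if attempt < attempts:
            sleep(delay)
            delay *= 2
    return False


# Possible use at the end of the deploy job:
# ok = wait_until_healthy(lambda: http_probe("https://fenja-frings.de/"))
# raise SystemExit(0 if ok else 1)
```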

Continuous Deployment

- Automated builds on git push

- Staging environment for preview

- Blue-green deployment

- Easy rollback capability

Skills Demonstrated

Web Development

- Static site generation with Hugo

- JAMstack architecture

- Responsive design

- HTML/CSS/JavaScript

- SEO best practices

DevOps

- Docker containerization

- CI/CD pipeline setup

- VPS deployment

- SSL/TLS management

- DNS configuration

Content Creation

- Professional writing

- Technical documentation

- Portfolio presentation

- SEO-aware content

Design Thinking

- User experience design

- Information architecture

- Visual hierarchy

- Accessibility considerations

Timeline & Status

🔄 In Progress

- Planning: Complete

- Design: In progress

- Hugo Setup: Complete

- Content Creation: In progress

- Deployment: Ready for launch

- Estimated Completion: 2025-12-31

Milestones

- Page Load Time: < 1 second (3G)

- Lighthouse Score: 90+ across all metrics

- Accessibility: WCAG 2.1 AA

- SEO Score: 95+

- Uptime: 99.9%

Future Enhancements

- Newsletter subscription integration

- Comments system (Disqus or custom)

- Dark mode theme toggle

- Multi-language support (i18n)

- Advanced analytics

- Dynamic portfolio filtering

- Search functionality

- Automated blog updates from other platforms

Success Metrics

- ✅ Website launches at fenja-frings.de

- ✅ All portfolio projects documented

- ✅ High performance scores

- ✅ Good search engine visibility

- ✅ Professional appearance

- ✅ Easy to maintain and update

- ✅ Drives inbound opportunities

This website project demonstrates the ability to create production-ready web properties with modern best practices, from conception through deployment and maintenance.

Overview

PyRunner (originally named pySchieber) is a specialized web application for organizing and managing Shadowrun tabletop RPG campaigns. It serves as a collaborative tool for game masters and players to track game state, NPC information, locations, and mechanical game systems.

The project is deployed and live at soycaf.net, featuring comprehensive game management capabilities tailored to the Shadowrun system.

Key Features

Campaign Management

- Actions & Results Tracking: Record player actions and outcomes for each session

- NPC Database: Maintain detailed records of non-player characters with attributes and relationships

- Location Registry: Track important locations with descriptions and connections

- Items & Equipment: Catalog weapons, gear, and valuable items with properties

Game System Implementation

- Shadowrun Mechanics: Native support for Shadowrun-specific game rules

- Skill Checks: Automated skill check calculations and result tracking

- Stocks System: Track in-game assets, money, and resources

- Tick System: Time management for initiative and action sequences

- Gang and Corp Management: Track rival organizations and their properties
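As an illustration of what automated skill checks involve, here is a sketch of a Shadowrun dice-pool test. The source does not name an edition, so it assumes Shadowrun 5th Edition conventions:

- Roll one d6 per die in the pool; every 5 or 6 is a hit.
- More than half the dice showing 1 is a glitch.
- A glitch with no hits is a critical glitch.

The class and function names are hypothetical.

```python
import random
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CheckResult:
    rolls: tuple[int, ...]
    hits: int
    glitch: bool
    critical_glitch: bool

    def succeeded(self, threshold: int) -> bool:
        # Meeting the threshold counts as success.
        return self.hits >= threshold


def evaluate(rolls: list[int]) -> CheckResult:
    """Score a list of d6 results using the (assumed) SR5 rules above."""
    hits = sum(1 for r in rolls if r >= 5)
    ones = sum(1 for r in rolls if r == 1)
    glitch = ones > len(rolls) / 2
    return CheckResult(tuple(rolls), hits, glitch, glitch and hits == 0)


def roll_check(pool: int, rng: Optional[random.Random] = None) -> CheckResult:
    rng = rng or random.Random()
    return evaluate([rng.randint(1, 6) for _ in range(pool)])
```

Keeping `evaluate` pure makes the scoring testable and lets a session log store the raw rolls alongside the result.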

Session Organization

- Structured Data Storage: Organized database for all game elements

- Multiple Campaign Support: Manage multiple concurrent campaigns

- Player Collaboration: Shared view for players and game masters

Technical Implementation

Architecture

- Framework: Flask for lightweight, flexible web application development

- Templating: Jinja2 for dynamic HTML generation

- Frontend: HTML/CSS for responsive user interface

- Database: SQLite for development, PostgreSQL for production deployment

- Containerization: Docker for consistent deployment

Key Design Decisions

- Modular design with separate modules for game mechanics

- Clean separation between game logic and presentation

- Extensible architecture to support multiple game systems

- RESTful API endpoints for data management

Database Schema

Normalized relational database with tables for:

- Campaigns

- Characters & NPCs

- Locations

- Items & Equipment

- Actions & Results

- Game mechanics state

Skills Demonstrated

- Flask Framework: Building feature-rich web applications

- Python Development: Modular, well-structured code

- Database Design: Relational database schema for complex domains

- Jinja2 Templating: Dynamic template rendering

- Game System Knowledge: Deep understanding of Shadowrun mechanics

- Web Application Architecture: Multi-user web app design

- Docker Deployment: Containerized production deployment

- Domain-Driven Design: Organizing complex game system logic

Challenges & Solutions

Challenge: Representing complex Shadowrun mechanics in a web interface

Solution: Modular system design with game-specific modules for each mechanic

Challenge: Supporting multiple concurrent campaigns

Solution: Campaign-scoped database queries and session management
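Campaign scoping is easiest to get right when no query can forget it. One way is for every data-access function to take the campaign id and include it in the `WHERE` clause. The sketch uses the standard-library `sqlite3` module, matching the SQLite development database. The table, column and class names are hypothetical and far simpler than the real schema.

```python
import sqlite3
from typing import Optional

SCHEMA = """
CREATE TABLE campaigns (
    id   INTEGER PRIMARY KEY,
    name TEXT NOT NULL
);
CREATE TABLE npcs (
    id          INTEGER PRIMARY KEY,
    campaign_id INTEGER NOT NULL REFERENCES campaigns(id),
    name        TEXT NOT NULL,
    notes       TEXT
);
"""


class NpcRepository:
    """All NPC access goes through here, so every query is scoped to one campaign."""

    def __init__(self, conn: sqlite3.Connection, campaign_id: int):
        self.conn = conn
        self.campaign_id = campaign_id

    def add(self, name: str, notes: str = "") -> int:
        cur = self.conn.execute(
            "INSERT INTO npcs (campaign_id, name, notes) VALUES (?, ?, ?)",
            (self.campaign_id, name, notes),
        )
        return cur.lastrowid

    def get(self, npc_id: int) -> Optional[tuple]:
        # Filtering on campaign_id as well means an id from another campaign yields None.
        return self.conn.execute(
            "SELECT id, name, notes FROM npcs WHERE id = ? AND campaign_id = ?",
            (npc_id, self.campaign_id),
        ).fetchone()

    def list_all(self) -> list[tuple]:
        return self.conn.execute(
            "SELECT id, name, notes FROM npcs WHERE campaign_id = ? ORDER BY name",
            (self.campaign_id,),
        ).fetchall()
```

In a Flask view, the repository would be constructed per request from the campaign selected in the URL or session.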

Current Status

🔄 In Progress - Core functionality implemented and deployed, ongoing feature additions for expanded game system support

Deployment

- Live at: soycaf.net

- Container: Docker deployment for easy scaling

- Hosting: Personal VPS infrastructure

- Monitoring: Integrated with homelab monitoring stack

Future Improvements

- GraphQL API for more flexible client queries

- Real-time collaboration features using WebSockets

- Mobile-optimized interface

- Character sheet export/import

- Automated backup and recovery systems

Overview

Spirits Primal is an ambitious game development project building a web-based game inspired by the beloved classic Spirits Online. Rather than recreating the original, this project tells the prequel story—how the players established and built the spirits world.

This is a complex, creative project combining game development, narrative design, artistic creation, and web technologies.

Project Vision

Core Concept

Spirits Primal reimagines the Spirits Online universe as a foundational world-building game. Players take on the role of world architects, establishing the fundamental systems, creatures, and societies that would eventually become the spirits world.

Narrative Framework

The game tells the creation story of the spirits world:

- How did the spirits come into existence?

- What were the first civilizations and conflicts?

- How did the world’s mechanics and rules form?

- What is the history before the original Spirits Online timeline?

Gameplay Philosophy

- Collaborative World Building: Players collectively shape the world

- Emergent Narrative: Stories emerge from player actions and interactions

- Systems-Driven Design: Focus on mechanics that create interesting interactions

- Creative Expression: Opportunities for artistic and narrative creativity

Game Design

Design Goals

- Create a presentable playable prototype

- Document comprehensive game mechanics in a design document

- Gain approval from Yhoko (original Spirits Online creator)

- Create original artwork and assets

Core Mechanics

Civilization Building

- Players establish and manage civilizations

- Develop technology trees and infrastructure

- Create unique culture and identity

- Form alliances or conflicts with other civilizations

Spiritual Systems

- Create and customize spirit creatures

- Establish spiritual hierarchies and relationships

- Develop magical systems and abilities

- Create unique spirit abilities and properties

World Dynamics

- Environmental progression and evolution

- Climate and geography interactions

- Resource systems and trade

- Political and social systems

Type System

Reference: Type System.md (detailed mechanics)

- Classification system for spirits and creatures

- Type interactions and relationships

- Power balancing through type system

- Strategic depth through type matchups
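The actual type system is specified in Type System.md and is not reproduced here. The sketch below only illustrates how a matchup table creates strategic depth. Its types (`EMBER`, `TIDE`, `GROVE`, `STONE`), multipliers and dual-type rule are invented for the example and do not come from the design document.

```python
from enum import Enum


class SpiritType(Enum):
    EMBER = "ember"
    TIDE = "tide"
    GROVE = "grove"
    STONE = "stone"


# (attacker, defender) -> damage multiplier; unlisted pairs are neutral (1.0).
# The EMBER/TIDE/GROVE cycle keeps any single type from dominating.
MATCHUPS: dict[tuple[SpiritType, SpiritType], float] = {
    (SpiritType.TIDE, SpiritType.EMBER): 2.0,
    (SpiritType.EMBER, SpiritType.GROVE): 2.0,
    (SpiritType.GROVE, SpiritType.TIDE): 2.0,
    (SpiritType.EMBER, SpiritType.TIDE): 0.5,
    (SpiritType.GROVE, SpiritType.EMBER): 0.5,
    (SpiritType.TIDE, SpiritType.GROVE): 0.5,
    (SpiritType.STONE, SpiritType.EMBER): 1.5,
}


def multiplier(attacker: SpiritType, defender: SpiritType) -> float:
    return MATCHUPS.get((attacker, defender), 1.0)


def damage(base: int, attacker: SpiritType, defender_types: list[SpiritType]) -> int:
    """Invented rule: a multi-typed defender multiplies the modifiers of all its types."""
    result = float(base)
    for d in defender_types:
        result *= multiplier(attacker, d)
    return round(result)
```

Keeping matchups as data rather than code makes it straightforward to rebalance the table during playtesting.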

Player Experience

Early Game:

- Tutorial on core mechanics

- Establishing first civilization

- Creating initial spirit creatures

- Learning world systems

Mid Game:

- Expanding civilization influence

- Building alliances and conflicts

- Developing unique abilities and systems

- Creating cultural identity

Late Game:

- Complex civilization interactions

- Emergent stories from player actions

- World-wide political systems

- Establishing legacy

Technical Implementation

Technology Considerations

Game Engine Options

- Phaser: 2D-focused, good for isometric/tile-based games

- Babylon.js: 3D-capable, good for richer visuals

- Three.js: Flexible 3D graphics library

- Custom WebGL: Maximum control, higher complexity

Architecture

Client (Browser)

├── Game Rendering Engine

├── Game Logic & Simulation

├── UI Layer

└── Network Client

Server (Optional for multiplayer)

├── Game State Management

├── Physics/Simulation

├── Persistence Layer

└── Multiplayer Synchronization
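A common way to keep the "Game Logic & Simulation" layer independent of rendering speed is a fixed-timestep loop. The simulation advances in constant steps, while rendering runs as often as the browser allows (in the browser, each frame would be driven by `requestAnimationFrame`). The sketch shows this accumulator pattern in Python. The 20 ticks per second rate, the clamp value and the class name are assumptions.

```python
from typing import Callable

TICK = 1.0 / 20   # simulation step in seconds (assumed 20 ticks per second)
MAX_FRAME = 0.25  # clamp long pauses (e.g. a background tab) to limit catch-up ticks


class FixedStepLoop:
    def __init__(self, update: Callable[[float], None], render: Callable[[float], None]):
        self.update = update  # advances the world state by exactly TICK seconds
        self.render = render  # draws the world; receives an interpolation factor in [0, 1)
        self.accumulator = 0.0

    def frame(self, elapsed: float) -> int:
        """Process one rendered frame; return how many simulation ticks ran."""
        self.accumulator += min(elapsed, MAX_FRAME)
        ticks = 0
        while self.accumulator >= TICK:
            self.update(TICK)
            self.accumulator -= TICK
            ticks += 1
        # Leftover time lets the renderer interpolate between the last two states.
        self.render(self.accumulator / TICK)
        return ticks
```

A fixed step also makes the simulation deterministic for a given input sequence, which helps if the optional multiplayer server later needs to replay or verify client actions.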

Development Stack

- Language: TypeScript (compiled to JavaScript) for type safety

- Build Tools: Webpack/Vite for bundling

- State Management: Redux or similar for game state

- Networking: WebSockets for multiplayer (if included)

- Art Pipeline: Aseprite or similar for pixel art

- Audio: Web Audio API for game sound

World Building Resources

Reference Games for Study

- WorldBox: Sandbox world building game

- Eco: Civilization and ecology simulation

- Original Spirits Online: Mechanics and setting reference

Design Inspiration

- Reddit Worldbuilding community discussions

- Game Mechanics Wiki research

- Original Spirits Online lore

Project Structure

Milestones

Current Phase

🔄 Early Development - Design phase and prototype planning

Team & Roles

Team Members:

- JA: Project coordination and design

- Jemar: Web development and architecture

- Tess: Game design and mechanics

- Zeta: Audio and creative direction

No fixed deadline or budget: this is a passion project with flexible scope.

Skills Demonstrated

Game Development

- Game design and mechanics creation

- Narrative design and world-building

- Player experience design

- Game balance and systems thinking

- Prototyping and iteration

Web Development

- JavaScript/TypeScript

- Game engine integration

- Web audio and graphics APIs

- Performance optimization for games

- Multiplayer network architecture

Creative Skills

- World-building and lore creation

- Narrative design

- Visual and audio design direction

- Community collaboration

Project Management

- Cross-functional team coordination

- Long-term creative project planning

- Iterative development

- Community engagement

Challenges & Approach

Challenge: Capturing the essence of Spirits Online while creating something new

Approach: Deep study of original game, community feedback, iterative design

Challenge: Coordinating creative vision across distributed team

Approach: Comprehensive design documents, regular team syncs, shared vision statements

Challenge: Managing scope of complex game systems

Approach: MVP-first approach, modular design, feature prioritization

This project aims to:

- Honor the original Spirits Online community

- Create a new experience in the shared universe

- Build creative tools for collaborative world-building

- Establish a foundation for community-driven narrative

Current Status

📋 Design & Planning Phase

- Core concept established

- Team assembled with complementary skills

- Reference materials and inspiration collected

- Beginning detailed design document

- Planning initial prototype scope

This is a long-term creative project with no fixed deadline, allowing for thoughtful design and high-quality execution.

Success Metrics

- ✅ Functional game prototype demonstrating core mechanics

- ✅ Detailed design document that captures the vision

- ✅ Original artwork that establishes unique visual identity

- ✅ Community approval and support from Spirits Online community

- ✅ Foundation for future expansion and development

Overview

Duck API is a minimalist REST API service that returns random duck images. While simple in concept, it serves as a clean example of API design principles and containerized deployment.

The API is live and accessible at duck.ziegel.me, making it a fully deployed project suitable for portfolio demonstration.

Key Features

- Random Duck Image Endpoint: Returns a random duck image from the collection

- Lightweight and Fast: Minimal dependencies, quick startup time

- Containerized Deployment: Full Docker support for easy deployment

- REST API Best Practices: Proper HTTP methods and status codes

- Image Serving: Efficient image storage and serving mechanism

Technical Implementation

Built with Flask, this API demonstrates:

- Framework: Flask microframework for lightweight HTTP handling

- Image Storage: Static or database-backed image collection

- Containerization: Docker for consistent deployment across environments

- Hosting: Deployed on a VPS with proper reverse proxy configuration

The API follows RESTful principles with clean endpoint design and proper HTTP semantics.

Architecture

Client Request

↓

Flask Application

↓

Image Repository

↓

HTTP Response (Image + Metadata)
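The request flow above is small enough to sketch end to end. To stay dependency-free, the sketch uses a plain WSGI callable from the standard library instead of Flask. Flask applications are WSGI applications too, so the shape is the same. The in-memory image store, the `/random` path, the `X-Duck-Name` metadata header and the status codes for the edge cases are assumptions, not the live service's code.

```python
import mimetypes
import random
from typing import Callable, Iterable, Optional


def make_app(images: dict[str, bytes], rng: Optional[random.Random] = None) -> Callable:
    """Build a WSGI app that serves one random image from `images` on GET /random."""
    rng = rng or random.Random()
    names = sorted(images)

    def app(environ: dict, start_response: Callable) -> Iterable[bytes]:
        path = environ.get("PATH_INFO", "/")
        method = environ.get("REQUEST_METHOD", "GET")
        if path != "/random":
            start_response("404 Not Found", [("Content-Type", "text/plain")])
            return [b"not found"]
        if method not in ("GET", "HEAD"):
            start_response("405 Method Not Allowed",
                           [("Allow", "GET, HEAD"), ("Content-Type", "text/plain")])
            return [b"method not allowed"]
        if not names:
            start_response("503 Service Unavailable", [("Content-Type", "text/plain")])
            return [b"no ducks available"]
        name = rng.choice(names)
        body = images[name]
        content_type = mimetypes.guess_type(name)[0] or "application/octet-stream"
        start_response("200 OK", [
            ("Content-Type", content_type),
            ("Content-Length", str(len(body))),
            ("Cache-Control", "no-store"),  # every request should get a fresh random duck
            ("X-Duck-Name", name),          # hypothetical metadata header
        ])
        return [b"" if method == "HEAD" else body]

    return app
```

For a local run, `wsgiref.simple_server.make_server("127.0.0.1", 8000, make_app(...)).serve_forever()` serves the app. In production, a WSGI server would run it inside the container behind Traefik.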

Deployment

- Container: Docker image for portable deployment

- Hosting: Deployed at duck.ziegel.me on personal infrastructure

- Reverse Proxy: Traefik for HTTPS/SSL termination

- Uptime: Monitored via homelab monitoring stack

Skills Demonstrated

- Python development and Flask framework

- REST API design and implementation

- Docker containerization and deployment

- Backend service architecture

- Image handling and serving

- DNS and domain configuration

Status

✅ Fully functional and live in production

This project demonstrates the ability to take a simple idea through to production deployment with proper containerization and infrastructure management.

Overview

The Personal Finance Manager is an advanced learning project demonstrating enterprise-grade microservices architecture. It combines multiple technologies and patterns to create a realistic, production-ready financial management system.

This project is an excellent portfolio piece because it showcases:

- Deep understanding of microservices patterns

- Advanced Java/Micronaut knowledge

- Distributed systems thinking

- DevOps and containerization

- Monitoring and observability

- Complete system design

Project Goals

- Learn Micronaut Ecosystem: Master HTTP, DI, Data, Security, Messaging, Scheduling

- Build Realistic System: Demonstrate microservice communication and coordination

- Deploy Containerized: Docker, CI/CD, and monitoring best practices

- Production Quality: High code standards and comprehensive testing

Architecture

Microservices Design

API Gateway

├── User Service

│ ├── Authentication (JWT)

│ ├── User Accounts

│ └── Profiles

├── Account Service

│ ├── Bank Accounts

│ ├── Balance Tracking

│ └── Account Aggregation

├── Transaction Service

│ ├── CRUD Operations

│ ├── Tagging & Categories

│ ├── Event Publishing

│ └── Kafka Integration

├── Import Service

│ ├── CSV File Upload

│ ├── Parsing & Validation

│ ├── Bulk Import

│ └── Error Handling

└── Report Service

├── Scheduled Report Generation

├── Email Delivery

├── PDF Export

└── Analytics

Supporting Services:

├── PostgreSQL (Data Persistence)

├── Kafka (Event Streaming)

├── Redis (Caching)

├── Prometheus (Metrics)

└── Grafana (Visualization)

Technology Stack

| Layer | Technology | Rationale |
|---|---|---|
| Framework | Micronaut | Compile-time DI, fast startup, low memory |
| Language | Java 17+ | Modern Java features, wide ecosystem |
| Database | PostgreSQL | Reliable RDBMS, excellent JDBC support |
| Messaging | Kafka | Stream processing, high throughput |
| Authentication | JWT + Micronaut Security | Stateless, secure, flexible |
| Scheduling | Micronaut Scheduling | Lightweight cron-style tasks |
| API Docs | OpenAPI/Swagger | Standard API documentation |
| Monitoring | Prometheus + Grafana | Industry standard observability |
| Deployment | Docker Compose | Orchestration for local/small deployment |
| CI/CD | GitHub Actions | Integrated testing and deployment |

Key Features

User Management

- Registration and authentication

- JWT-based token issuance

- Role-based access control (RBAC)

- Password hashing (BCrypt)

- Account profile customization
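In the service itself, token handling would be delegated to Micronaut Security, but it helps to see what a JWT actually contains. The stdlib sketch below issues and verifies an HS256-signed token in the RFC 7519 layout: base64url header, payload and HMAC-SHA256 signature, joined by dots. `sub`, `iat` and `exp` are registered claims, while `roles` is a custom claim assumed here for RBAC. The secret and TTL are placeholders.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def _b64url(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _b64url_decode(text: str) -> bytes:
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def issue_token(secret: bytes, user_id: str, roles: list[str],
                ttl: int = 3600, now: Optional[float] = None) -> str:
    iat = int(time.time() if now is None else now)
    header = {"alg": "HS256", "typ": "JWT"}
    payload = {"sub": user_id, "roles": roles, "iat": iat, "exp": iat + ttl}
    signing_input = ".".join(
        _b64url(json.dumps(part, separators=(",", ":")).encode()) for part in (header, payload)
    )
    signature = hmac.new(secret, signing_input.encode("ascii"), hashlib.sha256).digest()
    return f"{signing_input}.{_b64url(signature)}"


def verify_token(secret: bytes, token: str, now: Optional[float] = None) -> dict:
    """Return the payload if signature and expiry are valid, else raise ValueError."""
    try:
        header_b64, payload_b64, sig_b64 = token.split(".")
    except ValueError:
        raise ValueError("malformed token") from None
    if json.loads(_b64url_decode(header_b64)).get("alg") != "HS256":
        raise ValueError("unexpected algorithm")  # never let the token pick e.g. "none"
    expected = hmac.new(secret, f"{header_b64}.{payload_b64}".encode("ascii"),
                        hashlib.sha256).digest()
    if not hmac.compare_digest(expected, _b64url_decode(sig_b64)):
        raise ValueError("bad signature")
    payload = json.loads(_b64url_decode(payload_b64))
    if payload["exp"] <= (time.time() if now is None else now):
        raise ValueError("token expired")
    return payload
```

Because the token is self-contained, the gateway and the other services can each validate it without a session store, which is what "stateless" in the technology table refers to.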

Account Management

- Create, update, delete bank accounts

- Multi-account support

- Account aggregation

- Balance tracking and history

- Account linking to users

Transaction Tracking

- Full CRUD operations for transactions

- Date, amount, description tracking

- Dynamic tagging and categorization

- Recurring transaction support

- Transaction history and search

- Bulk transaction import

CSV Import

- File upload handling

- CSV parsing and validation

- Preview before import

- Error reporting and recovery

- Batch processing

- Duplicate detection
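The import pipeline above (parse, validate, preview, report errors, skip duplicates) can be sketched with Python's `csv` module. In the planned Java service, a library such as Apache Commons CSV or OpenCSV would play this role, as listed under Risk Mitigation. The following are assumptions about the bank export format:

- Column names `date`, `amount` and `description`.
- ISO dates.
- A (date, amount, description) duplicate key.

```python
import csv
import io
from dataclasses import dataclass
from datetime import date
from decimal import Decimal, InvalidOperation
from typing import Iterable


@dataclass(frozen=True)
class Row:
    booked_on: date
    amount: Decimal
    description: str


@dataclass
class ImportPreview:
    valid: list[Row]
    errors: list[str]      # "line N: reason", shown to the user before committing
    duplicates: list[Row]  # already stored, or repeated within the file


def preview_import(csv_text: str, existing: Iterable[tuple] = ()) -> ImportPreview:
    preview = ImportPreview([], [], [])
    seen = set(existing)
    reader = csv.DictReader(io.StringIO(csv_text))
    for line_no, record in enumerate(reader, start=2):  # line 1 is the header
        try:
            row = Row(
                booked_on=date.fromisoformat(record["date"].strip()),
                amount=Decimal(record["amount"].strip()),  # Decimal, never float, for money
                description=record["description"].strip(),
            )
        except (KeyError, ValueError, InvalidOperation, AttributeError) as exc:
            preview.errors.append(f"line {line_no}: {exc!r}")
            continue
        key = (row.booked_on, row.amount, row.description)
        if key in seen:
            preview.duplicates.append(row)
        else:
            seen.add(key)
            preview.valid.append(row)
    return preview
```

Only after the user confirms the preview would `valid` be written in one batch.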

Reporting

- Scheduled monthly report generation

- Multiple export formats (PDF, CSV, JSON)

- Spending pattern analysis

- Budget tracking and alerts

- Email delivery of reports

- Customizable report templates

Monitoring & Observability

- Prometheus metrics export

- Health check endpoints

- Application performance monitoring

- Service-to-service tracing

- Centralized logging

- Grafana dashboard visualization
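In the Micronaut services, metrics output would come from a metrics library (Micronaut integrates with Micrometer) rather than hand-written code. The sketch below only shows the plain-text exposition format that Prometheus scrapes, using a hypothetical `transactions_imported_total` counter.

```python
from collections import defaultdict


def _escape(value: str) -> str:
    # Label values must escape backslash, double quote and newline.
    return value.replace("\\", "\\\\").replace('"', '\\"').replace("\n", "\\n")


class Counter:
    """A labelled counter rendered in the Prometheus text exposition format."""

    def __init__(self, name: str, help_text: str, label_names: tuple[str, ...]):
        self.name = name
        self.help_text = help_text
        self.label_names = label_names
        self._values: dict[tuple[str, ...], float] = defaultdict(float)

    def inc(self, *label_values: str, amount: float = 1.0) -> None:
        if len(label_values) != len(self.label_names):
            raise ValueError("label count mismatch")
        if amount < 0:
            raise ValueError("counters can only increase")
        self._values[label_values] += amount

    def render(self) -> str:
        lines = [f"# HELP {self.name} {self.help_text}", f"# TYPE {self.name} counter"]
        for values, total in sorted(self._values.items()):
            labels = ",".join(f'{k}="{_escape(v)}"' for k, v in zip(self.label_names, values))
            lines.append(f"{self.name}{{{labels}}} {total!r}")
        return "\n".join(lines) + "\n"
```

Grafana then queries Prometheus, for example with `rate(transactions_imported_total[5m])`, to chart import throughput per status.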

Technical Deep Dive

User Service

Micronaut service responsibilities:

- JWT Token Generation/Validation

- Password Hashing (BCrypt)

- User Registration

- Authentication Endpoints

- User Profile Management

Transaction Service

Micronaut service responsibilities:

- Event-Driven Architecture

- Kafka Producer/Consumer

- Transaction Lifecycle Management

- Category and Tag Support

- Search and Filtering

- Async Processing

Report Service

Scheduled tasks (Micronaut @Scheduled):

- Monthly Report Generation

- PDF Generation (iText/Apache PDFBox)

- Email Templating (Thymeleaf)

- Export to Multiple Formats

- Analytics Computation
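At its core, the monthly report is an aggregation over transactions. The sketch below computes spending per category for one calendar month and flags categories that exceed their budget. The input shape, category names and budget figures are hypothetical. In the service, this logic would run inside a scheduled job and feed the PDF and e-mail templates.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date
from decimal import Decimal


@dataclass(frozen=True)
class Txn:
    booked_on: date
    amount: Decimal  # negative = spending, positive = income
    category: str


def monthly_spending(txns: list[Txn], year: int, month: int) -> dict[str, Decimal]:
    """Total spending (as positive amounts) per category, largest first."""
    totals: dict[str, Decimal] = defaultdict(Decimal)
    for t in txns:
        if t.booked_on.year == year and t.booked_on.month == month and t.amount < 0:
            totals[t.category] += -t.amount
    return dict(sorted(totals.items(), key=lambda kv: kv[1], reverse=True))


def budget_alerts(spending: dict[str, Decimal], budgets: dict[str, Decimal]) -> list[str]:
    return [
        f"{cat}: spent {spent} of {budgets[cat]}"
        for cat, spent in spending.items()
        if cat in budgets and spent > budgets[cat]
    ]
```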

API Gateway

- Request routing and aggregation

- Authentication middleware

- Rate limiting

- Request/response transformation

- Load balancing (if needed)
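Rate limiting at a gateway is commonly done with a token bucket per client. Tokens refill at a steady rate, each request spends one, and an empty bucket means the gateway answers 429 Too Many Requests. The sketch keeps buckets in memory and injects the clock so it can be tested. The rate and burst values are assumptions, and a gateway with several instances would keep this state in a shared store such as the Redis instance listed under Supporting Services.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class _Bucket:
    tokens: float
    updated: float


class TokenBucketLimiter:
    def __init__(self, rate: float, burst: int, clock: Callable[[], float] = time.monotonic):
        self.rate = rate    # tokens added per second
        self.burst = burst  # bucket capacity, i.e. the largest allowed burst
        self.clock = clock
        self._buckets: dict[str, _Bucket] = {}

    def allow(self, client_id: str) -> bool:
        now = self.clock()
        bucket = self._buckets.get(client_id)
        if bucket is None:
            bucket = self._buckets[client_id] = _Bucket(float(self.burst), now)
        # Refill for the time elapsed since this client's last request, capped at capacity.
        bucket.tokens = min(self.burst, bucket.tokens + (now - bucket.updated) * self.rate)
        bucket.updated = now
        if bucket.tokens >= 1:
            bucket.tokens -= 1
            return True
        return False  # caller should respond with 429 Too Many Requests
```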

Database Schema

Core Tables

- users: User accounts and authentication

- bank_accounts: User bank accounts

- transactions: Financial transactions

- categories: Transaction categories

- tags: Transaction tags

- reports: Generated reports metadata

- audit_log: System audit trail

Relationships

- Users → Bank Accounts (1:N)

- Users → Transactions (1:N)

- Bank Accounts → Transactions (1:N)

- Transactions → Categories (N:N)

- Transactions → Tags (N:N)
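The tables and relationships above translate into DDL roughly as follows. For self-containment, the sketch runs against SQLite via Python's `sqlite3`. On the PostgreSQL target, types would differ, for example `NUMERIC(12,2)` for amounts. Columns beyond the listed table names are assumptions. The N:N relationship becomes a join table, and categories would follow the same pattern as tags.

```python
import sqlite3

DDL = """
PRAGMA foreign_keys = ON;

CREATE TABLE users (
    id            INTEGER PRIMARY KEY,
    email         TEXT NOT NULL UNIQUE,
    password_hash TEXT NOT NULL                                     -- BCrypt hash only
);

CREATE TABLE bank_accounts (
    id      INTEGER PRIMARY KEY,
    user_id INTEGER NOT NULL REFERENCES users(id) ON DELETE CASCADE, -- Users -> Bank Accounts (1:N)
    name    TEXT NOT NULL
);

CREATE TABLE transactions (
    id          INTEGER PRIMARY KEY,
    user_id     INTEGER NOT NULL REFERENCES users(id),               -- Users -> Transactions (1:N)
    account_id  INTEGER NOT NULL REFERENCES bank_accounts(id),       -- Bank Accounts -> Transactions (1:N)
    booked_on   TEXT NOT NULL,                                       -- ISO date
    amount      TEXT NOT NULL,                                       -- decimal string; NUMERIC(12,2) in PostgreSQL
    description TEXT
);

CREATE TABLE tags (
    id   INTEGER PRIMARY KEY,
    name TEXT NOT NULL UNIQUE
);

-- Transactions -> Tags (N:N) needs a join table.
CREATE TABLE transaction_tags (
    transaction_id INTEGER NOT NULL REFERENCES transactions(id) ON DELETE CASCADE,
    tag_id         INTEGER NOT NULL REFERENCES tags(id) ON DELETE CASCADE,
    PRIMARY KEY (transaction_id, tag_id)
);
"""


def create_schema(conn: sqlite3.Connection) -> None:
    conn.executescript(DDL)
```

In the real project, this DDL would live in versioned migration files rather than application code, in line with the migration management listed under Skills Demonstrated.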

Development Roadmap

Phase 1: Foundation (Weeks 1-2, ~20 hours)

- Micronaut fundamentals

- Project setup and build configuration

- Database schema design

- User service implementation

- Authentication middleware

Phase 2: Core Services (Weeks 3-4, ~35 hours)

- Account service development

- Transaction service with Kafka integration

- CSV import service

- Database operations and ORM setup

- API endpoint development

Phase 3: Advanced Features (Week 5, ~15 hours)

- Report generation and scheduling

- Email integration (SMTP)

- PDF export functionality

- Batch processing for imports

Phase 4: Operations & Deployment (Week 6, ~20 hours)

- Docker image creation

- Docker Compose orchestration

- Prometheus metrics integration

- Grafana dashboard creation

- GitHub Actions CI/CD pipeline

- API documentation (Swagger)

Total Estimated Effort: 80-100 hours over 6 weeks

Skills Demonstrated

Micronaut Framework

- Dependency injection and beans

- HTTP client and server

- Data persistence with Micronaut Data

- Security with JWT

- Scheduled tasks

- Configuration management

- Health checks and metrics

Java Development

- Modern Java 17+ features

- Functional programming patterns

- Exception handling

- Testing (JUnit 5, Mockito)

- Build tools (Gradle/Maven)

Distributed Systems

- Microservices architecture

- Event-driven systems

- Kafka messaging

- Service-to-service communication

- Eventual consistency patterns

Database Design

- Relational schema design

- Normalization and optimization

- JDBC and ORM usage

- Migration management

- Query optimization

DevOps & Deployment

- Docker containerization

- Docker Compose orchestration

- GitHub Actions CI/CD

- Container networking

- Volume management

Monitoring & Observability

- Prometheus metrics

- Grafana dashboarding

- Application health checks

- Performance monitoring

- Log aggregation

API Design

- RESTful principles

- OpenAPI/Swagger documentation

- Error handling and status codes

- Versioning strategies

- API security

Risk Mitigation

| Risk | Mitigation |
|---|---|
| Micronaut Learning Curve | Allocate weeks 1-2 for focused learning, use official tutorials |
| Kafka Complexity | Use embedded Kafka for local dev, start with simpler RabbitMQ if needed |
| CSV Parsing | Use Apache Commons CSV or OpenCSV libraries |
| Email Integration | Use MailHog for development, mock SMTP for testing |
| Service Coordination | Docker Compose handles orchestration, explicit service dependencies |
| GraalVM Native | Time-box exploration, not critical for MVP |

Deployment Strategy

Local Development

- Docker Compose with all services

- Embedded database for quick iteration

- Mock external services

Production-Ready Deployment

- Containerized services on Kubernetes (future)

- External PostgreSQL cluster

- Kafka cluster for high throughput

- Prometheus/Grafana monitoring stack

- Load balancing and auto-scaling

Monitoring Stack

Application Metrics

↓

Prometheus (Scraping)

↓

Grafana (Visualization)

↓

Alerting (Slack/Email)
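Prometheus scrapes each service over HTTP and expects metrics in its plain-text exposition format. The minimal sketch below shows what a scrape returns by rendering one counter in that format with only the Python standard library. The metric and label names are hypothetical. In the Micronaut services, this text would normally come from a metrics library such as Micrometer rather than being written by hand.

```python
from dataclasses import dataclass, field

LabelSet = tuple[tuple[str, str], ...]


@dataclass
class MetricFamily:
    """A metric name plus its labelled samples (example metric is hypothetical)."""
    name: str
    kind: str  # "counter", "gauge", ...
    help_text: str
    samples: dict[LabelSet, float] = field(default_factory=dict)


def format_labels(labels: LabelSet) -> str:
    """Render {key="value",...}, escaping backslashes, quotes and newlines."""
    if not labels:
        return ""
    parts = []
    for key, value in labels:
        value = value.replace("\\", "\\\\").replace('"', '\\"').replace("\n", "\\n")
        parts.append(f'{key}="{value}"')
    return "{" + ",".join(parts) + "}"


def render(families: list[MetricFamily]) -> str:
    """Produce the text a Prometheus scrape of /metrics would receive."""
    lines = []
    for fam in families:
        lines.append(f"# HELP {fam.name} {fam.help_text}")
        lines.append(f"# TYPE {fam.name} {fam.kind}")
        for labels, value in fam.samples.items():
            lines.append(f"{fam.name}{format_labels(labels)} {value}")
    return "\n".join(lines) + "\n"


http_requests = MetricFamily("http_requests_total", "counter", "Handled HTTP requests.")
http_requests.samples[(("method", "GET"), ("status", "200"))] = 1027
http_requests.samples[(("method", "POST"), ("status", "500"))] = 3

print(render([http_requests]), end="")
```

Each sample line pairs the metric name and its label set with the current value. Prometheus stores these lines as time series and Grafana queries them.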

Success Criteria

- ✅ All microservices functional and tested

- ✅ Complete API documentation (OpenAPI)

- ✅ Docker Compose deployment working

- ✅ CI/CD pipeline green (tests passing)

- ✅ Monitoring dashboard showing metrics

- ✅ Scheduled reports generating correctly

- ✅ User authentication and authorization working

- ✅ Comprehensive error handling

- ✅ Production-quality code with tests

Current Status

📋 Planned / Ready to Start

All requirements documented in Software Requirements Document (SRD).

Estimated completion: 6 weeks with consistent development.

This project is an excellent demonstration of:

- Enterprise-grade architecture design

- Microservices mastery

- DevOps capabilities

- Modern Java development

- Complete system thinking

Stretch Goals

- Native Image builds with GraalVM

- GraphQL API endpoint

- Mobile-friendly React frontend

- OAuth2 integration (GitHub/Google)

- Kubernetes deployment with Helm

- Advanced ML-based spending predictions

- Real-time transaction processing

- Multi-currency support

- Tax report generation

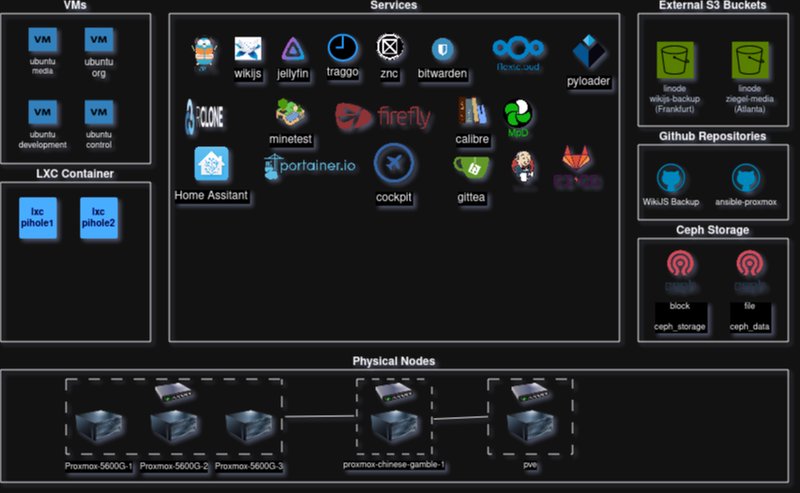

Overview

The Homelab Infrastructure project is a comprehensive, production-grade virtualized computing environment built on Proxmox VE. It is the most complex technical undertaking in this portfolio, integrating hardware, virtualization, distributed storage, containerization, networking, and automation into a cohesive system.

This project serves as both a personal learning platform and a fully functional self-hosted infrastructure running dozens of services for personal and community use.

Architecture Overview

Hardware Infrastructure

Compute Nodes

4-Node Proxmox VE Cluster:

PVE-5600G-1

- CPU: AMD Ryzen 5 5600G (6 cores / 12 threads)

- RAM: 32 GB DDR4

- Storage: 4TB (2TB NVMe + 2TB SATA SSD)

- Network: 2.5Gbps Ethernet

PVE-5600G-2

- CPU: AMD Ryzen 5 5600G

- RAM: 32 GB DDR4

- Storage: 4TB (2TB NVMe + 2TB SATA SSD)

- Network: 2.5Gbps Ethernet

PVE-5600G-3

- CPU: AMD Ryzen 5 5600G

- RAM: 16 GB DDR4 (upgradable to 32GB)

- Storage: 4TB (2TB NVMe + 2TB SATA SSD)

- Network: 2.5Gbps Ethernet

PVE-Chinese (Legacy, being replaced)

- Older hardware

- Scheduled for replacement in 2025

- Temporary storage server role

Planned Hardware Upgrades (2025)

Data Boxes (2 units) - Replacing legacy Chinese server

- Case: 2U rackmount

- RAM: 128GB per machine

- Network: 2x 10Gbps SFP+ cards

- Storage: 9x SATA bays each

PVE-N305-1 - NAS appliance

- Case: Fractal Design Node 304

- CPU: Intel N305

- RAM: 32GB DDR5

- Network: 4x 2.5Gbps + PCIe slot

- Storage: 4x 20TB HDD + 2x 4TB SSD

Networking

Network Topology:

- Central managed switch (8 SFP+, VLAN support)

- Horatio unmanaged switches (2.5Gbps and 10Gbps)

- Cat 7 cabling (50m, future-proofed)

- VLAN segmentation for service isolation

Equipment:

Storage Architecture

Ceph Distributed Storage

Ceph Cluster (3 nodes)

├── Monitor (MON) - Cluster health

├── Object Storage Daemon (OSD) - Data storage

│ ├── Node 1: 2x 2TB NVMe + 2x 2TB SATA

│ ├── Node 2: 2x 2TB NVMe + 2x 2TB SATA

│ └── Node 3: 2x 2TB NVMe + 2x 2TB SATA

└── Data Replication (3x replication factor)
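Replication trades raw capacity for durability. The rough estimate below assumes the OSD layout in the tree above: 3 nodes × 4 × 2 TB, in decimal TB as marketed. It divides raw capacity by the replication factor and keeps headroom below Ceph's default `nearfull` warning ratio of 0.85. It ignores imbalance between OSDs, so real usable space will be somewhat lower.

```python
NEARFULL_RATIO = 0.85  # Ceph default mon_osd_nearfull_ratio


def usable_capacity_tb(osd_sizes_tb: list[float], replicas: int,
                       headroom: float = NEARFULL_RATIO) -> float:
    """Raw OSD capacity divided by the replica count, scaled by a fill headroom."""
    if replicas < 1:
        raise ValueError("replicas must be at least 1")
    if not 0 < headroom <= 1:
        raise ValueError("headroom must be in (0, 1]")
    return sum(osd_sizes_tb) / replicas * headroom


# Layout from the tree above: 3 nodes, each with 2x 2TB NVMe + 2x 2TB SATA.
osds = [2.0] * 4 * 3

print(f"raw:                      {sum(osds):.1f} TB")
print(f"usable at 3x replication: {usable_capacity_tb(osds, 3, headroom=1.0):.1f} TB")
print(f"before nearfull warning:  {usable_capacity_tb(osds, 3):.1f} TB")
```

This prints 24.0 TB raw, 8.0 TB usable at 3x replication, and 6.8 TB before the nearfull warning.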

Ceph Benefits:

- Distributed, self-healing storage

- Automatic data replication

- Scalable capacity

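- Fault tolerance: stays online through one node failure; with 3 replicas, data also survives two node failures, but I/O pauses until monitor quorum and the pool's min_size are restored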

- RBD (block storage) for VM disks

- Object storage for media files
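How many node failures the cluster can ride out depends on two Ceph rules. First, monitors need a majority quorum. Second, a replicated pool only serves I/O while at least `min_size` replicas are up (2 by default for a 3-replica pool). The sketch below models these rules, assuming one monitor and one replica of every placement group per node, as in the 3-node layout above. It is a simplification, not Ceph's actual peering logic.

```python
def quorum_size(monitors: int) -> int:
    """Smallest majority of monitors; Ceph needs this many MONs up to operate."""
    return monitors // 2 + 1


def cluster_state(hosts: int, down: int, min_size: int = 2) -> str:
    """Coarse state of a replicated pool with `down` of `hosts` nodes offline.

    Assumes pool size == hosts, with one MON and one replica of every
    placement group on each host (failure domain = host).
    """
    up = hosts - down
    if up == 0:
        return "no replicas reachable"
    if up < quorum_size(hosts):
        return "no MON quorum: I/O stops, data intact"
    if up < min_size:
        return "below min_size: I/O blocked, data intact"
    if up < hosts:
        return "degraded: still serving I/O"
    return "healthy"


for down in range(4):
    print(f"{down} node(s) down -> {cluster_state(hosts=3, down=down)}")
```

For three nodes, the model reports the following:
- Healthy with no nodes down.
- Degraded but still serving with one node down.
- Stalled with two nodes down, though one intact copy remains.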

Ceph Operations:

- Monitor cluster health

- OSD management and recovery

- Placement group balancing

- Data replication tuning

- Performance optimization

Remote Storage

Wasabi S3 Bucket

- Hot media storage

- S3FS mounted for direct filesystem access

- Cost-effective cloud backup

- Replicated media library

Hetzner Storage Box

- Cold incremental backups

- Geographic redundancy

- Off-site disaster recovery

- Affordable cold storage

Virtualization Layer

Virtual Machine Services

Media Stack (media.box)

- IP: 192.168.178.103

- CPU: 4 cores

- RAM: 8GB

- Storage: 6TB (Ceph)

- Services: Jellyfin, Calibre, NFS, Samba, MPD

- Purpose: Media center and file sharing

Organization Stack (org.box)

- IP: 192.168.178.101

- CPU: 2 cores

- RAM: 4GB

- Storage: 256GB (Ceph)

- Services: Bitwarden, WikiJS, Wallabag, RSS, Traggo, Uguu

- Purpose: Productivity and knowledge management

Development Stack (dev.box)

- IP: 192.168.178.100

- CPU: 2 cores

- RAM: 4GB

- Storage: 256GB (Ceph)

- Services: Gitea, Jenkins, GitLab CI/CD, n8n

- Purpose: CI/CD and automation platform

Control Stack (control.box)

- IP: 192.168.178.104

- CPU: 2 cores

- RAM: 4GB

- Storage: 256GB (Ceph)

- Services: Grafana, Portainer, Cockpit, Uptime Kuma, Heimdall

- Purpose: Monitoring and system management
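Summing the four VM allocations above against the capacity of the three Ryzen nodes gives a quick sense of headroom. The sketch below takes 6TB as 6000 GB and compares vCPUs with host threads, which is how Proxmox counts CPUs. The remaining RAM is not all free, since Proxmox itself and the Ceph OSD daemons also need memory.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VM:
    name: str
    cores: int
    ram_gb: int
    disk_gb: int


# Allocations as listed above (6TB taken as 6000 GB).
VMS = [
    VM("media.box", 4, 8, 6000),
    VM("org.box", 2, 4, 256),
    VM("dev.box", 2, 4, 256),
    VM("control.box", 2, 4, 256),
]

# Cluster totals from the three Ryzen 5 5600G nodes (legacy node excluded).
CLUSTER_THREADS = 3 * 12
CLUSTER_RAM_GB = 32 + 32 + 16


def summarize(vms: list[VM]) -> dict[str, int]:
    """Total the resources allocated across all VMs."""
    return {
        "cores": sum(vm.cores for vm in vms),
        "ram_gb": sum(vm.ram_gb for vm in vms),
        "disk_gb": sum(vm.disk_gb for vm in vms),
    }


totals = summarize(VMS)
print(f"vCPUs: {totals['cores']} of {CLUSTER_THREADS} threads")
print(f"RAM:   {totals['ram_gb']} of {CLUSTER_RAM_GB} GB "
      f"({totals['ram_gb'] / CLUSTER_RAM_GB:.0%})")
print(f"Ceph:  {totals['disk_gb']} GB provisioned")
```

For the listed VMs, this comes to 10 vCPUs, 20 GB of RAM (25% of 80 GB), and 6768 GB of provisioned Ceph storage.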

Docker Compose

- Service orchestration on each VM

- Health checks and restart policies

- Networking and volume management

- Environment-based configuration

Kubernetes Cluster (planned expansion)

- Current: 2 nodes

- Planned: 5+ node cluster

- Advanced orchestration and scaling

Service Architecture

Internet

↓

[Firewall/Router - OPNsense planned]

↓

[Reverse Proxy - Traefik]

↓

[Load Balancer / Router]

↓

┌───────┬───────┬───────┬─────────┐

│ Media │  Org  │  Dev  │ Control │

└───────┴───────┴───────┴─────────┘

↓

[Ceph Storage Backend]

Infrastructure as Code

Terraform

Purpose: Declarative VM provisioning

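```hcl
# Telmate/proxmox provider, 2.9.x-style disk block; the storage ID "ceph"
# must match an RBD storage defined in the Proxmox cluster.
resource "proxmox_vm_qemu" "media_box" {
  name        = "media.box"
  target_node = "pve-5600g-1" # Proxmox node that hosts the VM

  cores   = 4
  sockets = 1
  memory  = 8192 # MB

  disk {
    type    = "scsi"
    storage = "ceph"
    size    = "256G"
    ssd     = 1 # expose the disk to the guest as an SSD
  }
}
```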

Capabilities:

- VM creation and destruction

- Resource allocation

- Networking configuration

- Template management

Ansible

Purpose: Configuration management and deployment automation

Playbooks:

- VM initial setup and hardening

- Service deployment (Docker Compose)

- System updates and patches

- Configuration synchronization

- Secrets management (Ansible Vault)

Example Playbooks:

- ansible-proxmox: Main cluster provisioning

- vps-hardening: SSH, firewall, security

- service-deploy: Docker service deployment

Execution:

- ansible-playbook main.yml: Full cluster setup

- ansible-playbook hardening.yml: Security hardening

- Rolling deployments for zero-downtime updates

Monitoring & Observability

Prometheus + Grafana Stack

Prometheus:

- Metrics collection from all nodes and services

- Custom scrape targets for applications

- Alert rule evaluation

- Time-series data storage

Grafana:

- Comprehensive dashboards

- Real-time monitoring

- Historical trend analysis

- Alert visualization

Monitoring Targets:

- Node exporter (host metrics)

- Ceph cluster health

- VM resource utilization

- Service-specific metrics

- Network statistics

Alerting

Uptime Kuma:

- Service health monitoring

- Public status page

- Notification routing (Slack, email)

- Incident tracking

Alert Rules:

- High CPU/memory usage

- Storage capacity warnings

- Network connectivity issues

- Service unavailability

- Ceph health warnings
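Prometheus alert rules typically fire only after a condition has held for some time, set by the rule's `for` clause. This keeps short spikes from paging anyone; until the condition has held long enough, the alert is `pending`. The minimal sketch below models that state machine, counting consecutive samples instead of wall-clock time. The 90% CPU threshold and three-sample window are hypothetical values, not the lab's actual rules.

```python
from dataclasses import dataclass, field


@dataclass
class ThresholdRule:
    """Alert when a value stays above `threshold` for `for_samples` samples in a row."""
    name: str
    threshold: float
    for_samples: int
    _streak: int = field(default=0, init=False, repr=False)

    def observe(self, value: float) -> str:
        """Feed one sample and return the alert state: inactive, pending or firing."""
        self._streak = self._streak + 1 if value > self.threshold else 0
        if self._streak == 0:
            return "inactive"
        if self._streak < self.for_samples:
            return "pending"
        return "firing"


# Hypothetical rule: CPU usage above 90% for 3 consecutive scrapes.
high_cpu = ThresholdRule("HighCPU", threshold=90.0, for_samples=3)
cpu_samples = [95, 97, 60, 92, 93, 96, 99]
states = [high_cpu.observe(v) for v in cpu_samples]
print(states)
```

The dip to 60% resets the streak, so the alert only fires on the sixth sample.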

Logging

Centralized Logging:

- Container logs aggregation

- System journal capture

- Application-specific logs

- Log analysis and searching

Security & Hardening

Network Security

VLANs:

- Service isolation

- Traffic segmentation

- Smaller broadcast domains, limiting broadcast storms and lateral movement

- Guest network separation
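VLAN segmentation usually goes with one IP subnet per VLAN, so routing and firewall rules can be written per segment. The sketch below derives a per-VLAN /24 plan with Python's `ipaddress` module. The VLAN IDs, names, and the 10.10.0.0/16 block are hypothetical, not the lab's actual addressing; the VMs listed above currently sit in 192.168.178.0/24.

```python
import ipaddress

# Hypothetical VLAN IDs, roles and address block; not the lab's actual plan.
VLANS = {10: "management", 20: "services", 30: "storage (Ceph)", 40: "guest"}
BASE = ipaddress.ip_network("10.10.0.0/16")


def plan_subnets(base: ipaddress.IPv4Network, vlan_ids) -> dict[int, ipaddress.IPv4Network]:
    """Give each VLAN the /24 whose third octet equals its VLAN ID."""
    subnets = list(base.subnets(new_prefix=24))
    plan = {}
    for vid in vlan_ids:
        if not 0 <= vid < len(subnets):
            raise ValueError(f"VLAN {vid} does not map into {base}")
        plan[vid] = subnets[vid]
    return plan


for vid, net in plan_subnets(BASE, VLANS).items():
    gateway = next(net.hosts())  # first usable address, e.g. the router interface
    print(f"VLAN {vid:>2}  {VLANS[vid]:<15} {net}  gateway {gateway}")
```

Tying the third octet to the VLAN ID keeps addresses self-describing: a packet from 10.10.30.x obviously belongs to the storage segment.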

Firewall:

- OPNsense/pfSense planned

- Stateful firewall rules

- VPN support

- Network segmentation

SSH Hardening

- SSH key-based authentication only

- Disabled password login

- Custom SSH port

- Fail2ban for brute-force protection

- Regular key rotation
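The SSH settings above can be checked automatically. The sketch below parses an `sshd_config` and flags password login, disabled public-key authentication, and the default port. It mimics sshd's rule that the first value obtained for a keyword wins, but it ignores `Match` blocks, `Include` files, and repeated `Port` lines. On a live host, `sshd -T` prints the effective configuration and is the more reliable source.

```python
import re

# sshd's built-in defaults for the settings checked below.
DEFAULTS = {"passwordauthentication": "yes", "pubkeyauthentication": "yes", "port": "22"}


def parse_sshd_config(text: str) -> dict[str, str]:
    """Map lower-cased keywords to values; the first occurrence wins, as in sshd.

    Stops at the first Match block and does not follow Include directives.
    """
    settings: dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        # sshd accepts whitespace or "=" between keyword and value
        parts = re.split(r"[\s=]+", line, maxsplit=1)
        keyword = parts[0].lower()
        value = parts[1].strip() if len(parts) > 1 else ""
        if keyword == "match":
            break
        settings.setdefault(keyword, value)
    return settings


def audit(text: str) -> list[str]:
    """Return the hardening problems found in an sshd_config text."""
    cfg = {**DEFAULTS, **parse_sshd_config(text)}
    problems = []
    if cfg["passwordauthentication"].lower() != "no":
        problems.append("password login is enabled")
    if cfg["pubkeyauthentication"].lower() != "yes":
        problems.append("public-key authentication is disabled")
    if cfg["port"] == "22":
        problems.append("sshd listens on the default port 22")
    return problems


sample = """
# Hardened host
Port 2222
PasswordAuthentication no
PasswordAuthentication yes
PubkeyAuthentication yes
"""
print(audit(sample))  # → []
print(audit(""))      # → ['password login is enabled', 'sshd listens on the default port 22']
```

Because the first value wins, the duplicate `PasswordAuthentication yes` in the sample has no effect, so the audit reports no problems.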

Access Control

Authentik (Planned):

- Centralized SSO/LDAP

- User management

- OAuth2 / OIDC

- Application-level authentication

- Multi-factor authentication (MFA)

Secrets Management

- Ansible Vault for sensitive data

- Environment variable-based secrets

- No hardcoded passwords

- Regular secret rotation

Deployment Pipeline

Git-Driven Infrastructure

Git Push

↓

GitHub Actions Trigger

↓

Terraform Plan

↓

Manual Approval (or auto)

↓

Terraform Apply

↓

Ansible Provisioning

↓

Service Verification

↓

Smoke Tests

↓

Production Deployment
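The pipeline above is fail-fast: each stage runs only if every earlier stage succeeded, and the approval step is just another gate. The minimal sketch below uses stand-in stage functions, all hypothetical. In GitHub Actions, the same ordering is usually expressed with dependent jobs (`needs:`), and manual approval with a protected environment that requires reviewers.

```python
from typing import Callable

Stage = tuple[str, Callable[[], bool]]


def run_pipeline(stages: list[Stage]) -> list[str]:
    """Run stages in order and stop at the first one that fails."""
    log: list[str] = []
    for name, step in stages:
        ok = step()
        log.append(f"{name}: {'ok' if ok else 'FAILED'}")
        if not ok:
            break  # later stages, including production deployment, never run
    return log


def passes() -> bool:
    """Hypothetical stand-in for a stage that succeeds."""
    return True


stages: list[Stage] = [
    ("Terraform plan", passes),
    ("Approval", passes),                     # manual or automatic gate
    ("Terraform apply", passes),
    ("Ansible provisioning", passes),
    ("Service verification", lambda: False),  # simulate a failed check
    ("Smoke tests", passes),
    ("Production deployment", passes),
]

for entry in run_pipeline(stages):
    print(entry)
```

Here the simulated verification failure stops the run, so smoke tests and the production deployment never execute.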

CI/CD Integration

- Jenkins: Local CI/CD orchestration

- GitLab CI: Alternative pipeline engine

- GitHub Actions: External automation

- Automated testing before deployment

Current Capacity

- Total CPU Cores: 18 cores / 36 threads (3 × Ryzen 5 5600G, 6 cores each)

- Total RAM: 80GB (32 + 32 + 16)

- Storage: 12TB local NVMe + 12TB SATA SSD

- Ceph Capacity: Scales with node addition

- VM Startup Time: < 30 seconds

- Storage IOPS: 10,000+ (NVMe tier)

- Network Throughput: 2.5Gbps per node

- Cluster Failover: < 5 minutes

- Service Availability: 99.9% uptime
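A 99.9% availability target translates into a concrete downtime budget. The short sketch below does the arithmetic, assuming a 30-day month and a 365-day year.

```python
HOURS_PER_YEAR = 365 * 24  # 8760, ignoring leap years


def downtime_budget_minutes(availability: float, period_hours: float) -> float:
    """Maximum downtime allowed in a period for a given availability (0..1)."""
    return (1 - availability) * period_hours * 60


for label, hours in [("per 30-day month", 30 * 24), ("per year", HOURS_PER_YEAR)]:
    minutes = downtime_budget_minutes(0.999, hours)
    print(f"99.9% {label}: {minutes:.1f} min ({minutes / 60:.2f} h)")
```

That is 43.2 minutes per month and 8.76 hours per year. With failover under 5 minutes, about eight full failovers a month fit inside the budget.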

Maintenance & Operations

Monitoring Schedule

- Daily: Automated health checks

- Weekly: Performance review, backup verification

- Monthly: Capacity planning, security updates

- Quarterly: Disaster recovery drills

Backup Strategy

3-2-1 Backup Rule:

- 3 copies of critical data

- 2 different storage media

- 1 off-site backup

Implementation:

- Ceph replication (3 copies on-site)

- Wasabi S3 hot backup

- Hetzner Storage Box cold backup

- Incremental backups with Restic
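Whether a backup setup meets the 3-2-1 rule can be expressed as a small check. In this sketch, the Ceph pool counts as a single copy: its three replicas sit in the same cluster and replicate deletions and corruption along with everything else. The medium labels are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Copy:
    location: str
    medium: str
    offsite: bool


def satisfies_3_2_1(copies: list[Copy]) -> bool:
    """At least 3 copies, on at least 2 media, with at least 1 off-site."""
    return (
        len(copies) >= 3
        and len({c.medium for c in copies}) >= 2
        and any(c.offsite for c in copies)
    )


# The Ceph pool counts as ONE copy, however many replicas it keeps internally.
backup_plan = [
    Copy("Ceph cluster (primary data)", "local SSD", offsite=False),
    Copy("Wasabi S3", "cloud object storage", offsite=True),
    Copy("Hetzner Storage Box", "remote storage box", offsite=True),
]
print(satisfies_3_2_1(backup_plan))      # → True
print(satisfies_3_2_1(backup_plan[:1]))  # → False
```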

Updates & Patching

- Automated security updates via Ansible

- Kernel updates with planned downtime

- Service updates via Docker image pulls

- Rolling updates to maintain uptime
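Rolling updates touch one node (or a small batch) at a time and stop as soon as an updated node fails its health check, so the rest of the cluster keeps serving. Ansible supports this natively through the play-level `serial` keyword, together with `max_fail_percentage`. The sketch below only shows the control flow, using hypothetical `update` and `healthy` callables and node names taken from the hardware list above.

```python
from typing import Callable

NODES = ["pve-5600g-1", "pve-5600g-2", "pve-5600g-3"]


def rolling_update(
    hosts: list[str],
    update: Callable[[str], None],
    healthy: Callable[[str], bool],
    batch_size: int = 1,
) -> list[str]:
    """Update hosts batch by batch; halt before the next batch if any host is unhealthy."""
    done: list[str] = []
    for start in range(0, len(hosts), batch_size):
        batch = hosts[start:start + batch_size]
        for host in batch:
            update(host)
        unhealthy = [h for h in batch if not healthy(h)]
        if unhealthy:
            raise RuntimeError(f"halting rollout, unhealthy after update: {unhealthy}")
        done.extend(batch)
    return done


updated: list[str] = []
print(rolling_update(NODES, update=updated.append, healthy=lambda host: True))
```

Some Proxmox nodes also run Ceph OSDs. For those, the usual practice is to set the `noout` flag (`ceph osd set noout`) while a node reboots, so Ceph does not start rebalancing data in the meantime.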

Current Status

🔄 In Progress - Active Expansion

Completed

In Progress

Planned 2025

Skills Demonstrated

Virtualization & Cloud

- Proxmox VE cluster design and management

- VM resource allocation and optimization

- High availability and failover

- Cluster networking and VLAN support

Distributed Systems

- Ceph cluster architecture

- Data replication and fault tolerance

- Distributed storage optimization

- Cluster health monitoring

DevOps & Automation

- Infrastructure as Code (Terraform)

- Configuration management (Ansible)

- Automated deployment pipelines

- Version-controlled infrastructure

Linux Administration

- Enterprise Linux configuration

- Network configuration and optimization

- Security hardening

- Service management

Networking

- Network design and topology

- VLAN configuration

- 10Gbps network optimization

- Firewall and security rules

Monitoring & Observability

- Prometheus metrics collection

- Grafana dashboard design

- Alert rule creation

- Performance analysis

Storage Management

- Distributed storage architecture

- Backup strategy implementation

- Off-site replication

- Disaster recovery planning

Impact & Value

This homelab project demonstrates:

- Enterprise-Grade Infrastructure Design: Production-quality systems for personal use

- Complete System Ownership: From hardware selection through operations

- DevOps Mastery: Automation, monitoring, disaster recovery

- Continuous Learning: Regular iteration and improvement

- Cost Optimization: High-performance setup at reasonable cost

- Reliability: Self-healing, fault-tolerant systems

The infrastructure runs dozens of personal services, serves as a learning platform, and represents thousands of hours of accumulated expertise in virtualization, networking, and cloud infrastructure.

Future Vision

- Kubernetes-based orchestration for advanced scaling

- Multi-site setup for geographic redundancy

- Advanced backup automation (Restic + S3)

- Enhanced monitoring with log aggregation

- Cost analysis and optimization tooling

- Public status page and community engagement

- Documentation and blog series

This is an ongoing project that continues to grow and improve as technology and requirements change.